Ethics are a growing concern in digital products, particularly for UX designers, developers, and product managers.

Dark patterns continue to be pervasive and insidious. It’s our responsibility to identify and avoid these patterns in our own work.

However, there’s a specific type of dark pattern that I don’t think we’ve clearly labelled and identified, despite its prevalence in the digital ecosystem today.

I’m speaking of the Possibility Gap.

The Possibility Gap

The Possibility Gap is a dark pattern that arises when a product takes advantage of its users’ unknown unknowns about what is possible in digital products today.

The idea of unknown unknowns was popularized by Donald Rumsfeld and has roots in the Johari window.

“There are known knowns. These are things we know that we know. There are known unknowns. That is to say, there are things that we know we don’t know. But there are also unknown unknowns. There are things we don’t know we don’t know.” —Donald Rumsfeld

To be clear, unknown unknowns in themselves are not dark patterns. They’re not inherently negative. We all have essentially infinite unknown unknowns, and part of the joy of curiosity is discovering previously unknown unknowns. When this happens, they become known unknowns.

Known Unknowns

Most users have known unknowns. In these situations, we can lean on what we do know to identify when something might be possible, even if we’re not sure exactly how.

When you submit your credit card information to a website, you know that your data is being shared over a network, and potentially being stored somewhere. With that knowledge comes the understanding that it may be possible for it to be intercepted or stolen.

If a device that’s connected to the internet has a microphone, you know that the microphone could be activated and that “someone could be listening.” With that knowledge comes the unease that many of us experience around always-on devices like Alexa and Nest Hub.

When we have known unknowns, we’re capable of making educated decisions around how we choose to adopt and use products in our lives.

However, all users also have unknown unknowns.

Unknown Unknowns

When users have unknown unknowns, there are massive gaps between “what is possible” and their concept of “what might be possible.” There’s a Possibility Gap.

It’s like the blind spot in our vision (apologies to those living with vision issues—I couldn’t think of a more apt metaphor).

For most of our lives, we live under the assumption that our field of vision is complete. We think we can see everything in front of us. It’s only after someone tells us that we have a blind spot—and how to find it—that it even occurs to us that a blind spot in our vision could exist.

Unknown unknowns can be dangerous, because they leave us exposed—even when we think we’re on our guard.

When a company knows that their activities or technology may fall into the realm of unknown unknowns for their users, they have a choice to make.

The Possibility Gap is a Choice

If a company chooses to create products or carry out activities that take advantage of unknown unknowns, they’re taking advantage of the Possibility Gap—regardless of their motivations.

Sometimes Possibility Gaps can be relatively benign.

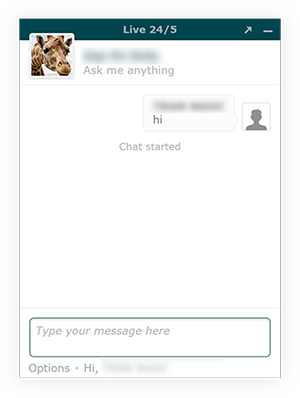

Take, for example, customer support chat apps that you find on most websites. These apps lean on existing mental models of how digital chat apps work: you write your message, hit send, and it’s sent to the agent.

However, many of these apps let agents view what you’re writing in real time, before you ever hit enter.

For most users, this possibility is an unknown unknown: why would they have any reason to suspect that the interface they’re using is behaving differently than the interface itself suggests it behaves?
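To make the gap concrete, here is a minimal sketch of the two behaviours. It is a toy model, not any vendor’s implementation: `AgentConsole`, `ChatWidget`, and the `stream_drafts` flag are all hypothetical names I’ve made up for illustration. The interface the user sees is identical in both modes; only the flag changes what reaches the agent.

```python
from dataclasses import dataclass, field


@dataclass
class AgentConsole:
    """Hypothetical model of what the support agent gets to see."""
    delivered: list = field(default_factory=list)      # messages the user sent
    previews_seen: list = field(default_factory=list)  # drafts seen before sending


class ChatWidget:
    """Hypothetical chat box; the user-facing behaviour is the same in both modes."""

    def __init__(self, agent: AgentConsole, stream_drafts: bool):
        self.agent = agent
        self.stream_drafts = stream_drafts  # assumption: a single on/off switch
        self.draft = ""

    def _share_draft(self) -> None:
        # In streaming mode every change to the draft is forwarded immediately.
        if self.stream_drafts:
            self.agent.previews_seen.append(self.draft)

    def type_text(self, text: str) -> None:
        self.draft += text
        self._share_draft()

    def backspace(self, count: int = 1) -> None:
        self.draft = self.draft[: max(0, len(self.draft) - count)]
        self._share_draft()

    def send(self) -> None:
        # This is the only step the user's mental model says is visible.
        self.agent.delivered.append(self.draft)
        self.draft = ""
```

In this sketch, if a user types a sentence, deletes it, and then sends “hi”, `delivered` contains only “hi” in both modes. With `stream_drafts=True`, the deleted sentence also shows up in `previews_seen`, which is exactly the part the user has no reason to suspect.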

But Possibility Gaps can also quickly become deeply concerning and invasive.

Recently, the Spanish soccer league, LaLiga, was found to be using its app to record audio in order to identify soccer games, and to use phone geolocation to locate bars streaming those games without a license.

The official mobile app of the Spanish soccer league would record audio to identify soccer games, and use the geolocation of the phone to locate which bars were streaming soccer games without licenses. The app was downloaded more than 10 million times, turning fans into narcs. https://t.co/iuSTfTopPM

— @mikko (@mikko) June 13, 2019

Without realising it, more than 10 million fans were being turned into “narcs” for the league. For users, the possibility that their phone could be used to identify and report pirated streams likely falls into the realm of unknown unknowns.

Meanwhile, Edin Jusupovic just noticed that Facebook is embedding tracking data directly into the photos you download from Facebook.

#facebook is embedding tracking data inside photos you download.

I noticed a structural abnormality when looking at a hex dump of an image file from an unknown origin only to discover it contained what I now understand is an IPTC special instruction. Shocking level of tracking.. pic.twitter.com/WC1u7Zh5gN

— Edin Jusupovic (@oasace) July 11, 2019

This has worrying implications for what Facebook is capable of tracking through something as inconspicuous as a downloaded image.

If you upload a photo with embedded tracking information, and another person downloads that photo, their downloaded image still has that tracking information embedded in the image itself.

Suppose that person then shares it with someone else, who uploads it to a site that Facebook can crawl, such as Reddit or Instagram. By examining the embedded tracking information, Facebook could identify the original source of the photo and infer the interpersonal connections and friendship circles implied by the sharing of photos between individuals, even when some of those individuals don’t have a Facebook account.

Even those deeply embedded in digital product design and development would likely consider this possibility to be in the realm of unknown unknowns.
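If you want to check your own downloads, the sketch below looks for an IPTC “Special Instructions” field in a JPEG. It rests on a few assumptions: that the metadata sits where IPTC data conventionally lives in JPEGs (an APP13 segment holding a Photoshop “8BIM” resource with ID 0x0404), and that the field is IPTC record 2, dataset 40. The function names are my own, the parser is deliberately simplified, and it says nothing about how Facebook writes or reads the value.

```python
import struct

SPECIAL_INSTRUCTIONS = (2, 40)  # IPTC record 2, dataset 40


def iter_jpeg_segments(data: bytes):
    """Yield (marker, payload) for header segments, stopping at the image data."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG file (missing SOI marker)")
    pos = 2
    while pos + 4 <= len(data) and data[pos] == 0xFF:
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # start of scan / end of image
            return
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        yield marker, data[pos + 4:pos + 2 + length]  # length counts its own 2 bytes
        pos += 2 + length


def find_iptc_block(app13: bytes):
    """Return the IPTC resource (ID 0x0404) from a Photoshop APP13 payload, if any."""
    header = b"Photoshop 3.0\x00"
    if not app13.startswith(header):
        return None
    pos = len(header)
    while pos + 12 <= len(app13) and app13[pos:pos + 4] == b"8BIM":
        (resource_id,) = struct.unpack(">H", app13[pos + 4:pos + 6])
        name_total = 1 + app13[pos + 6]  # Pascal string: length byte + name
        name_total += name_total % 2     # padded to an even size
        pos += 6 + name_total
        (size,) = struct.unpack(">I", app13[pos:pos + 4])
        pos += 4
        if resource_id == 0x0404:
            return app13[pos:pos + size]
        pos += size + (size % 2)         # resource data is padded to an even size
    return None


def iter_iptc_datasets(block: bytes):
    """Yield (record, dataset, value) for standard-length IPTC datasets."""
    pos = 0
    while pos + 5 <= len(block) and block[pos] == 0x1C:
        record, dataset, size = struct.unpack(">BBH", block[pos + 1:pos + 5])
        if size & 0x8000:  # extended-length datasets are not handled in this sketch
            return
        yield record, dataset, block[pos + 5:pos + 5 + size]
        pos += 5 + size


def special_instructions(jpeg: bytes):
    """Return the Special Instructions text, or None if the field is absent."""
    for marker, payload in iter_jpeg_segments(jpeg):
        if marker != 0xED:  # APP13
            continue
        block = find_iptc_block(payload)
        if block is None:
            continue
        for record, dataset, value in iter_iptc_datasets(block):
            if (record, dataset) == SPECIAL_INSTRUCTIONS:
                return value.decode("latin-1")
    return None
```

You could call it with something like `special_instructions(open("photo.jpg", "rb").read())`, where `photo.jpg` is a placeholder path. A result of `None` only means this simplified parser didn’t find the field; it isn’t proof that an image carries no tracking data.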

We Need to Take Responsibility

If you are working in the digital ecosystem today, you need to take responsibility for identifying and avoiding Possibility Gaps in your products. It’s time to label the Possibility Gap and treat it as the dark pattern that it is.

The first step is education. If you know your users may have an unknown unknown, your responsibility is to educate them, so that you can shift your activities from the realm of unknown unknowns into the realm of known unknowns.

If you feel you can’t educate your users about the Possibility Gaps in your products because you think they wouldn’t be happy to find out, treat that feeling as the glaring, flashing red alarm signal that it is.

Let’s work together to close the Possibility Gap.